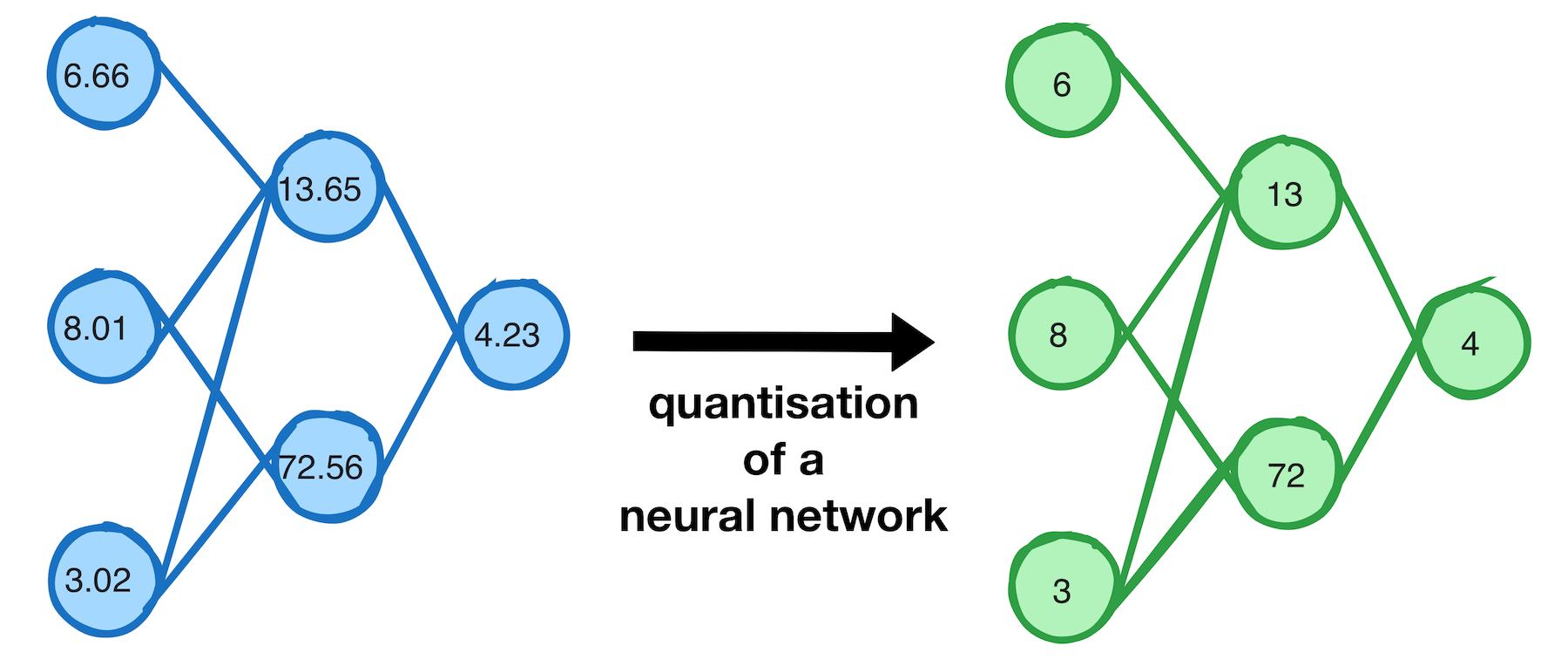

Patching NVIDIA's driver and vLLM to enable P2P on consumer GPUs

NVIDIA artificially restricts peer-to-peer (P2P) GPU communication to its enterprise cards. Turns out this is a software limitation, not a hardware one. I patched my NVIDIA driver to remove it, hacked vLLM to take advantage of it, and got a 15–50% throughput improvement running Qwen 3.5 35B on dual RTX 3090s.