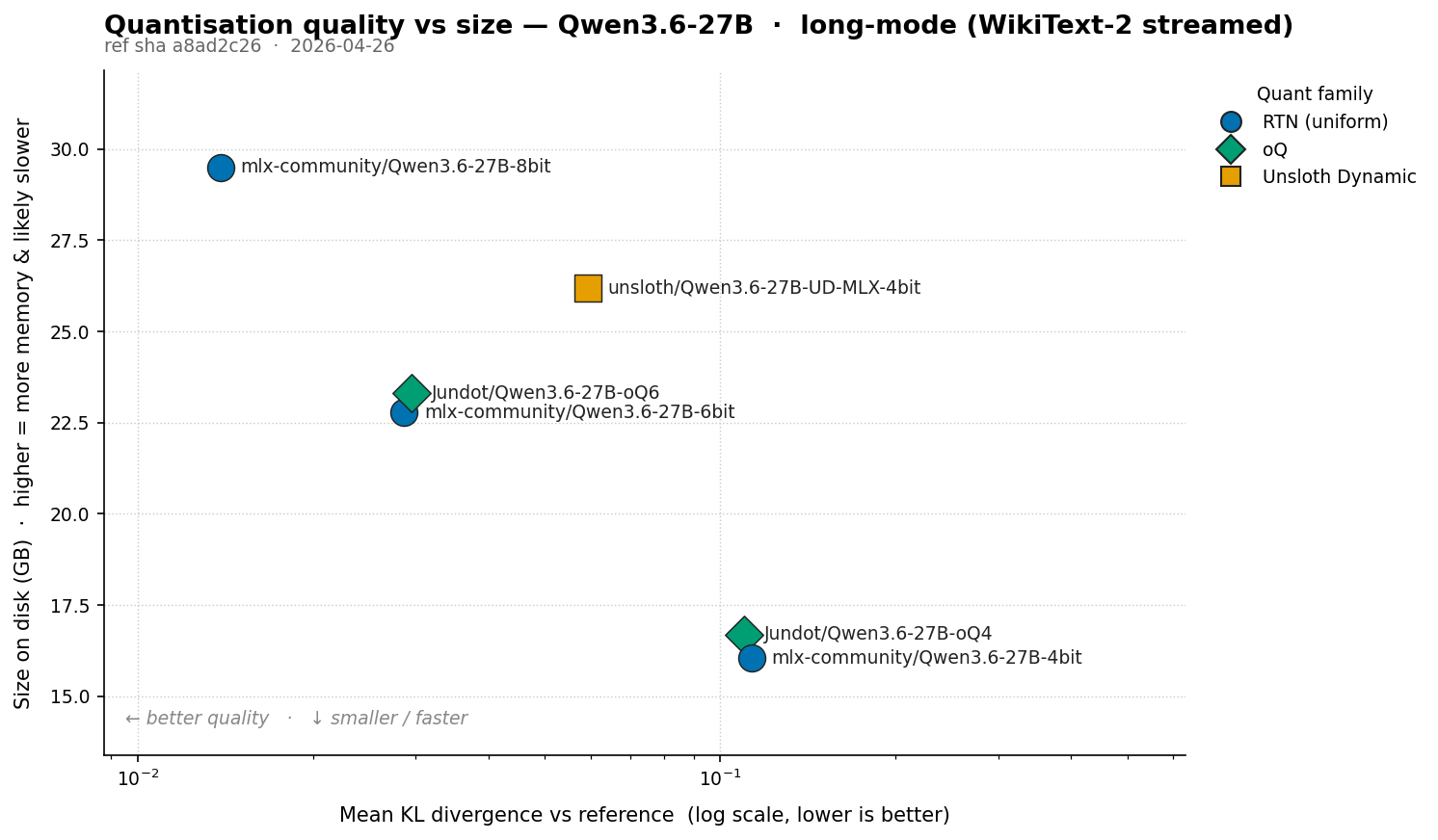

Measuring Model Quantisation Quality with KL Divergence

KL divergence against a known-good reference answers “how much did this quant change the model’s behaviour?” rather than “how good is this model overall?”.

What KLD measures

KL divergence measures how much one probability distribution disagrees with another. At each token position, both the reference and the quantised model emit a probability distribution over the full vocabulary (~248k tokens for Qwen-class models). The reference might say "~80% likely 'the', ~5% likely 'a', …"; the quant says something slightly different. KLD compares the two distributions at each position and then averages across all positions. ...
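A minimal sketch of the per-position comparison and averaging described above. It uses the textbook discrete formula KL(P ‖ Q) = Σ P(x) · ln(P(x) / Q(x)), assuming the reference model's distribution is P and the quant's is Q. This is not necessarily what any particular evaluation tool computes internally, and the toy 3-token vocabulary stands in for the real ~248k entries.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Convert raw logits into a probability distribution (numerically stable)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p: Sequence[float], q: Sequence[float], eps: float = 1e-12) -> float:
    """KL(P || Q) in nats for a single token position.

    p is the reference model's distribution, q the quantised model's.
    Terms with p == 0 contribute nothing; q is clamped to eps to avoid log(0).
    """
    if len(p) != len(q):
        raise ValueError("distributions must cover the same vocabulary")
    return sum(pi * math.log(pi / max(qi, eps)) for pi, qi in zip(p, q) if pi > 0.0)


def mean_kld(ref: Sequence[Sequence[float]], quant: Sequence[Sequence[float]]) -> float:
    """Average the per-position KLD across a sequence of token positions."""
    if len(ref) != len(quant) or not ref:
        raise ValueError("need the same, non-zero number of positions")
    return sum(kl_divergence(p, q) for p, q in zip(ref, quant)) / len(ref)


if __name__ == "__main__":
    # Toy 3-token vocabulary: reference vs a slightly different quant.
    ref = [0.80, 0.05, 0.15]
    quant = [0.75, 0.07, 0.18]
    print(f"KLD at this position: {kl_divergence(ref, quant):.5f} nats")
```

Identical distributions give exactly zero, and the value grows as the quant shifts probability mass away from where the reference put it. Note that KL divergence is asymmetric, so swapping which model is treated as P changes the number.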

New Apple Silicon M4 & M5 HiDPI Limitation on 4K External Displays

Starting with the M4 and continuing with the new M5 generation of Apple Silicon, macOS no longer offers full-resolution 4K HiDPI modes for external displays. The maximum HiDPI mode available on a 3840x2160 panel is now just 3360x1890; M2/M3 machines did not have this limitation. With this regression, Apple leaves users to choose between full screen real estate at 4K (3840x2160) with blurry text because HiDPI is disabled, or ...
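To put numbers on the trade-off, here is a small sketch of the arithmetic. It assumes the usual macOS HiDPI behaviour of rendering at 2x the logical resolution and then scaling that backing store to the panel's native pixels; the function names are illustrative only.

```python
# Assumption: a macOS HiDPI mode renders at 2x its logical resolution,
# then scales that backing store to the panel's native pixels.
SCALE = 2
PANEL = (3840, 2160)  # native pixels of a 4K external display


def backing_store(logical_w: int, logical_h: int, scale: int = SCALE) -> tuple:
    """Pixel size rendered for a HiDPI mode of the given logical size."""
    return logical_w * scale, logical_h * scale


def workspace_ratio(mode: tuple, reference: tuple) -> float:
    """Logical screen area of `mode` as a fraction of `reference`."""
    return (mode[0] * mode[1]) / (reference[0] * reference[1])


def panel_scale(mode: tuple, panel: tuple = PANEL) -> float:
    """Factor applied to the backing store to fit the panel (0.5 means exactly 2:1)."""
    return panel[0] / backing_store(*mode)[0]


if __name__ == "__main__":
    for mode in [(3840, 2160), (3360, 1890)]:
        bw, bh = backing_store(*mode)
        print(
            f"{mode[0]}x{mode[1]} HiDPI -> renders {bw}x{bh}, "
            f"scaled by {panel_scale(mode):.3f} to fit the panel, "
            f"{workspace_ratio(mode, PANEL):.1%} of full 4K workspace"
        )
```

Under that assumption, full 4K HiDPI needs a 7680x4320 backing store, while the 3360x1890 cap renders at 6720x3780 and gives roughly 23% less logical workspace than 3840x2160.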

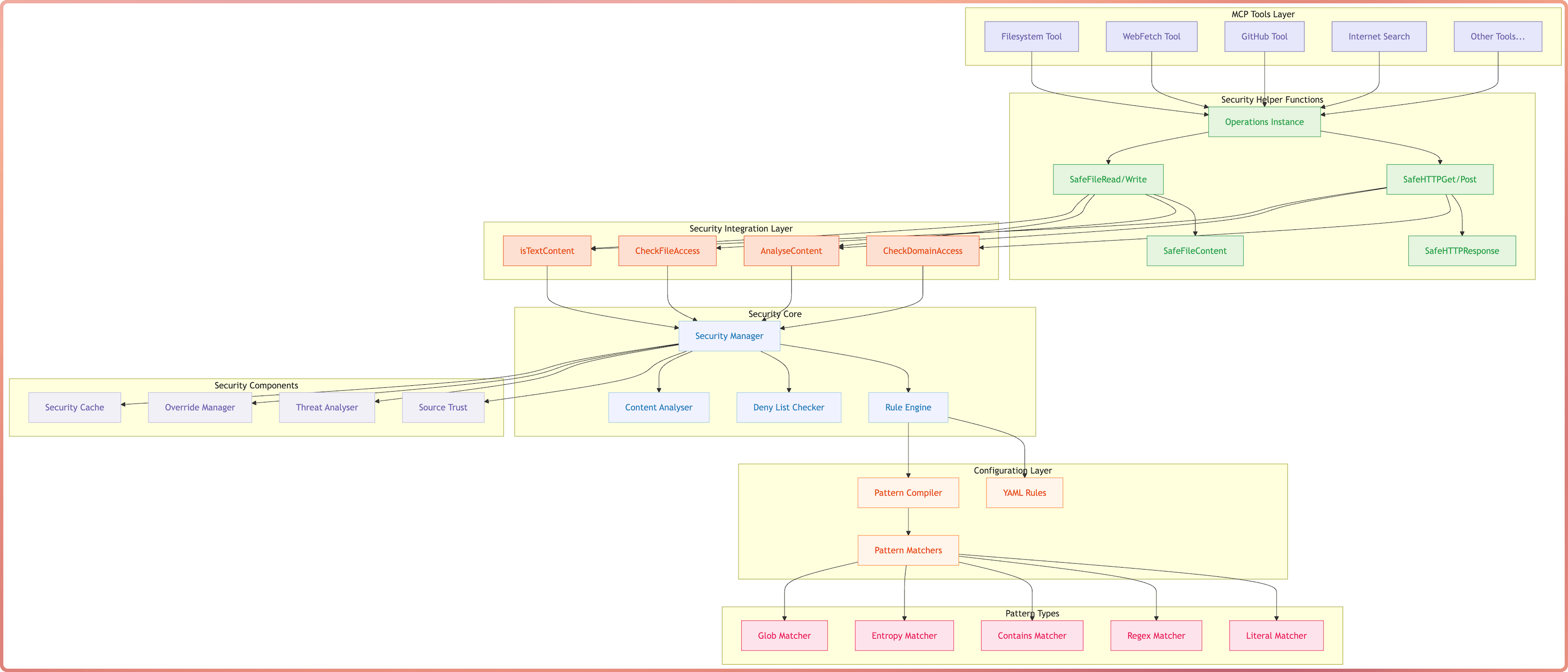

The advice I find myself repeating every time someone asks how to get started with Claude Code

Configuration

- Agent rules: concise, scoped CLAUDE.md files that shape agent behaviour
- Sandboxing: constrain file access and network connections
- Permissions: pre-approve safe operations, hard-block dangerous ones
- Hooks: run shell commands before/after tool calls as a safety net

Extend knowledge and capabilities

- Skills: dynamic knowledge acquisition with progressive disclosure
- Language servers: give the agent go-to-definition, find-references, and type checking
- MCP tools: external tool servers, used sparingly

Workflow

- Plan before acting: read-only exploration and task definition
- Embrace starting fresh sessions: keep context clean
- Template out common commands: reusable prompts for common tasks

...
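As an illustration of the hooks-as-safety-net idea, here is a sketch of a command hook written in Python. It assumes Claude Code's command-hook contract for `PreToolUse` events as I understand it: the event arrives as JSON on stdin with `tool_name` and `tool_input` fields, and exiting with code 2 blocks the tool call and feeds stderr back to the agent. The deny-list patterns are hypothetical examples, not a recommended policy.

```python
import json
import re
import sys
from typing import Optional

# Hypothetical deny-list; tune for your own environment.
BLOCKED_PATTERNS = [
    r"\brm\s+-rf\s+/",                     # rm -rf on an absolute path
    r"\bgit\s+push\s+(-f|--force)(\s|$)",  # force push (but not --force-with-lease)
]


def check_command(payload: dict) -> Optional[str]:
    """Return a reason string if a Bash tool call should be blocked, else None."""
    if payload.get("tool_name") != "Bash":
        return None
    command = payload.get("tool_input", {}).get("command", "")
    for pattern in BLOCKED_PATTERNS:
        if re.search(pattern, command):
            return f"Blocked by hook: command matches {pattern!r}"
    return None


def main() -> int:
    payload = json.load(sys.stdin)
    reason = check_command(payload)
    if reason:
        print(reason, file=sys.stderr)
        return 2  # assumed contract: exit code 2 blocks the tool call
    return 0


if __name__ == "__main__":
    sys.exit(main())
```

Keeping the decision logic in a pure function (`check_command`) makes the hook easy to unit-test without a running agent. The script itself would be registered as a `PreToolUse` command hook in your Claude Code settings.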

Patching NVIDIA's driver and vLLM to enable P2P on consumer GPUs

NVIDIA artificially restricts peer-to-peer (P2P) GPU communication to their enterprise cards. Turns out this is a software limitation, not a hardware one. I patched my drivers to remove it, hacked vLLM to take advantage of it, and got a 15-50% throughput improvement running Qwen 3.5 35b on dual RTX 3090s.
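A quick sanity check for whether P2P is actually exposed after patching, assuming PyTorch is installed. `torch.cuda.can_device_access_peer` reports what the CUDA driver says about a device pair; it does not measure bandwidth or confirm that vLLM is using the P2P path.

```python
from typing import List


def p2p_matrix() -> List[List[bool]]:
    """Report which GPU pairs the CUDA driver says can access each other directly.

    Returns an empty list if PyTorch or CUDA is unavailable.
    """
    try:
        import torch
    except ImportError:
        return []
    if not torch.cuda.is_available():
        return []
    n = torch.cuda.device_count()
    # The diagonal is trivially True: a device can always access its own memory.
    return [
        [i == j or bool(torch.cuda.can_device_access_peer(i, j)) for j in range(n)]
        for i in range(n)
    ]


if __name__ == "__main__":
    matrix = p2p_matrix()
    if not matrix:
        print("No CUDA devices visible (or PyTorch not installed).")
    for i, row in enumerate(matrix):
        peers = [j for j, ok in enumerate(row) if ok and j != i]
        print(f"GPU {i}: P2P with {peers if peers else 'none'}")
```

Running it before and after applying the driver patch on a dual RTX 3090 machine should show the off-diagonal entries change if the patch took effect.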

The Role Bridging Problem

An observation on functional correctness without domain quality.

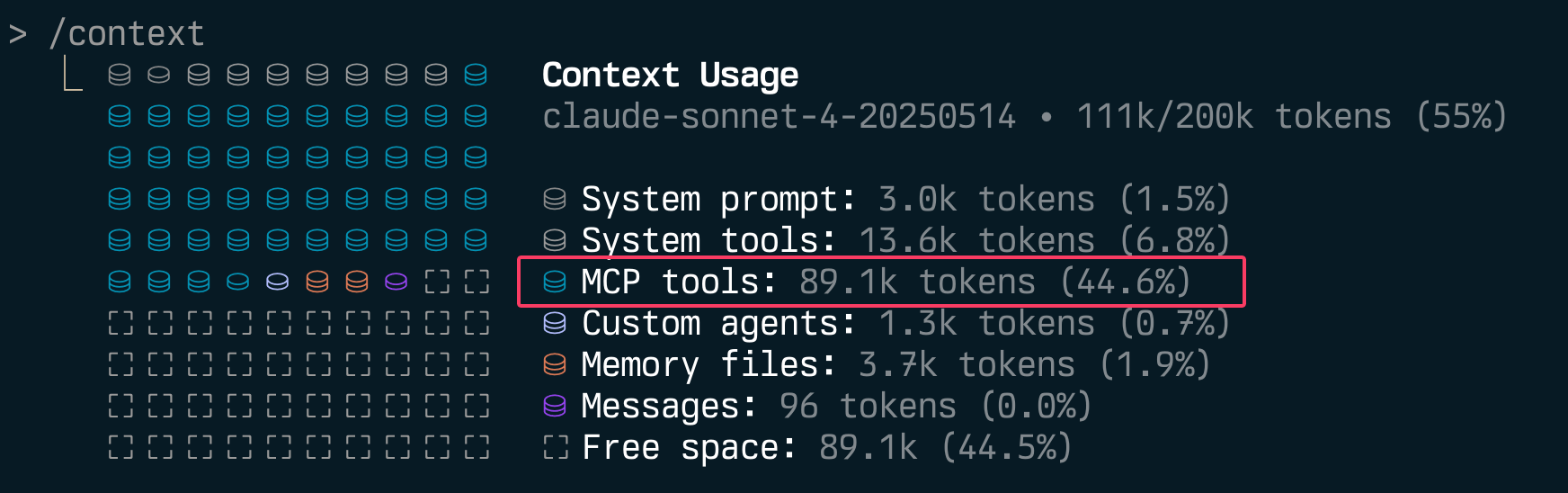

Stop Polluting Context - Let Users Disable Individual MCP Tools

If you’re building MCP servers, you should be adding the ability to disable individual tools.
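A minimal sketch of the idea, independent of any particular MCP SDK: filter the tool registry before advertising it, so disabled tools' names and descriptions never reach the client's context. The `Tool` dataclass, the `DISABLED_TOOLS` environment variable, and the example tool names are all hypothetical.

```python
import os
from dataclasses import dataclass
from typing import Callable, Iterable, List, Mapping, Optional, Set


@dataclass
class Tool:
    """Hypothetical stand-in for however your server represents a tool."""
    name: str
    description: str
    handler: Callable[..., object]


def parse_disabled(raw: str) -> Set[str]:
    """Split a comma-separated list of tool names, ignoring blanks and whitespace."""
    return {name.strip() for name in raw.split(",") if name.strip()}


def enabled_tools(
    tools: Iterable[Tool],
    env_var: str = "DISABLED_TOOLS",  # hypothetical variable name
    environ: Optional[Mapping[str, str]] = None,
) -> List[Tool]:
    """Return only the tools the user hasn't disabled, preserving order."""
    env = os.environ if environ is None else environ
    disabled = parse_disabled(env.get(env_var, ""))
    return [tool for tool in tools if tool.name not in disabled]


if __name__ == "__main__":
    registry = [
        Tool("web_search", "Search the web", lambda q: q),
        Tool("fetch_url", "Fetch a URL as markdown", lambda u: u),
        Tool("think", "Scratchpad for reasoning", lambda t: t),
    ]
    for tool in enabled_tools(registry, environ={"DISABLED_TOOLS": "think, fetch_url"}):
        print(tool.name)
```

Filtering at registration time, rather than rejecting calls later, is the point: whatever the server returns from MCP's `tools/list` is what gets loaded into the agent's context.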

MCP DevTools

MCP DevTools: a single server that replaced the 10-15 or so NodeJS/Python/Rust MCP servers I had running at any given time for agentic coding tools, providing the tools I consider useful for agents when coding.

The Problem

The MCP ecosystem has grown rapidly, but I found myself managing many separate servers, each often running multiple times (once for every MCP client I had open), not to mention the ever-growing memory and CPU consumption of all those NodeJS or Python processes. ...

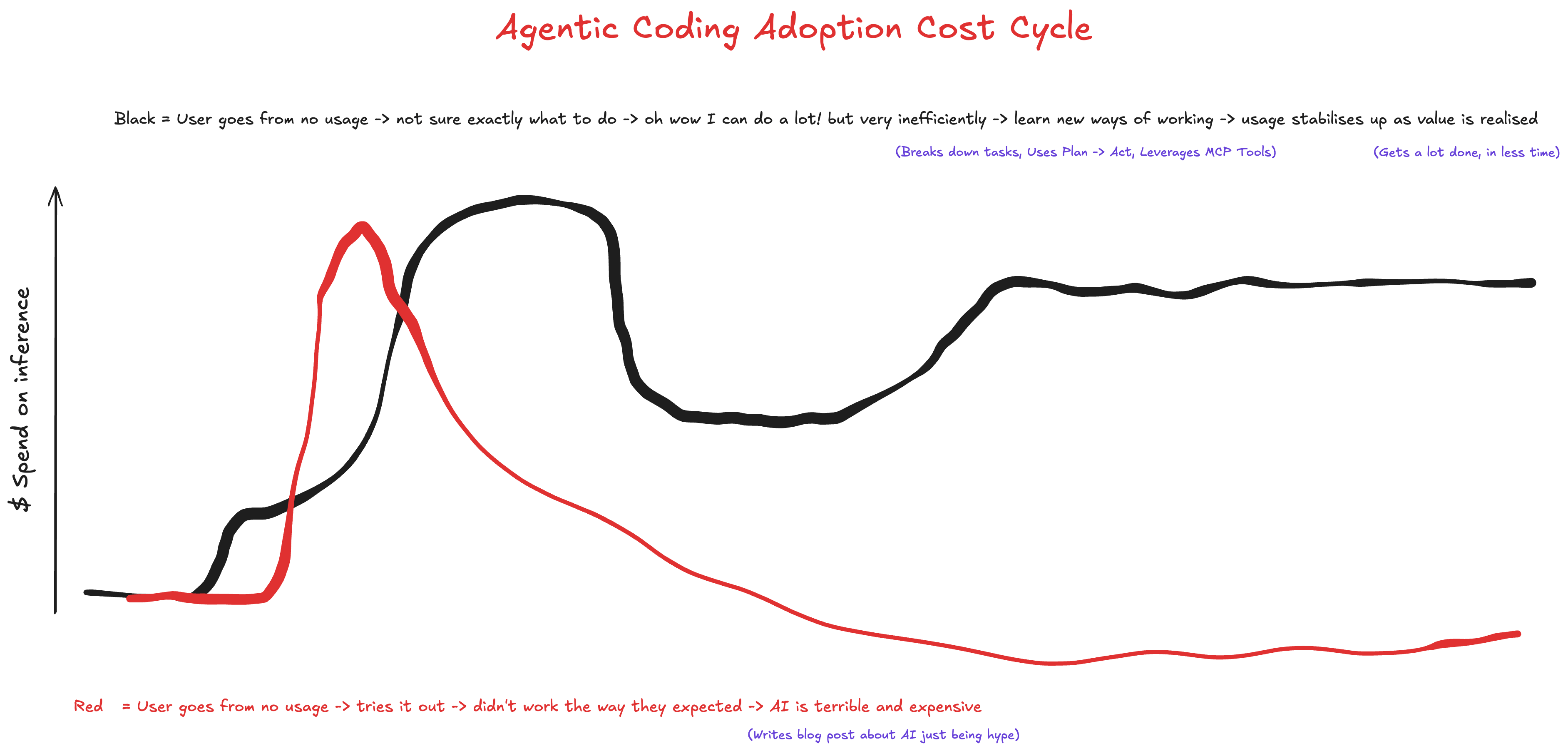

Agentic Coding Adoption Cost Cycle

Agentic Coding Workflow & Cline Demo

A Square Peg-hosted event on June 20, 2025, where I demonstrated a basic version of my daily agentic coding workflow using Cline and MCP tools. What does it take to write enterprise-grade code in the AI-native era? Join Square Peg investors James Tynan and Grace Dalla-Bona for a live demo and Q&A session with three leading AI-native developers (Grant Gurvis, Listiarso Wastuargo, and Sam McLeod) and get a behind-the-curtain look at the workflows that enable them to ship faster, smarter, and cleaner code using tools like Cursor, Cline, and smolagents. ...

Vibe Coding vs Agentic Coding

Picture this: A business leader overhears their engineering team discussing “vibe coding” and immediately imagines developers throwing prompts at ChatGPT until something works, then shipping whatever emerges to production. The term alone, “vibe coding”, conjures images of seat-of-the-pants development that would make any CTO break out in a cold sweat. This misunderstanding is creating a real problem. Whilst vibe coding represents genuine creative exploration that has its place, the unfortunate terminology is causing some business leaders to conflate all AI-assisted or AI-accelerated development with haphazard experimentation. I fear that engineers who use sophisticated AI coding agents, such as advanced agentic coding tools like Cline, to deliver production-quality solutions are finding their approaches questioned or dismissed entirely. ...